Project information

- Category: LLMs (Large Language Models)

- Project date: February 2025

- Project URL: github.com/rag-search

Using RAG to create external memory for an LLM

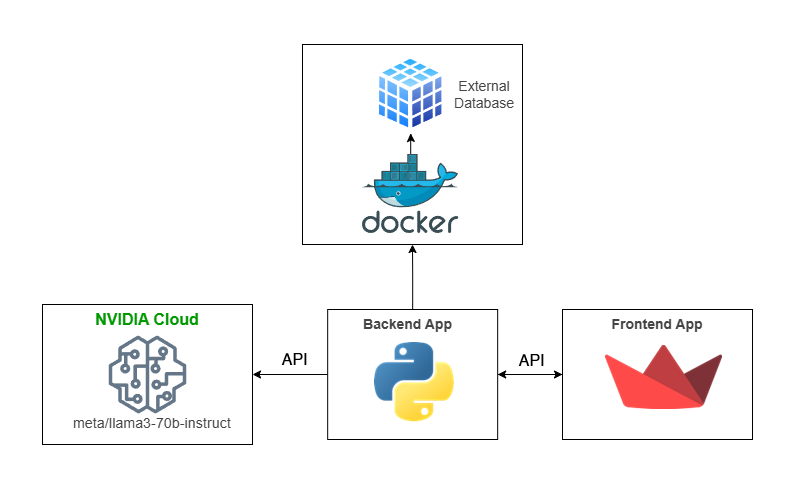

This project gives an LLM external memory using Retrieval-Augmented Generation (RAG). Documents in txt, pdf, pptx, and docx formats are processed and stored in a Qdrant vector database. For each prompt, the system retrieves relevant context from that database and sends the augmented prompt to the meta/llama3-70b-instruct model through a FastAPI backend. A Streamlit web app provides a simple interface for submitting queries and reading responses. The project uses Poetry for dependency management, and together these components let the LLM answer with knowledge beyond its training data.
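The retrieval step described above can be sketched in a few lines of Python. This is a minimal, self-contained illustration under stated assumptions, not the project's code: a toy bag-of-words "embedding" and an in-memory list stand in for the real embedding model and the Qdrant collection, and the names `embed`, `chunk`, `InMemoryStore`, and `build_prompt`, as well as the chunk size, overlap, and prompt wording, are hypothetical. The call to meta/llama3-70b-instruct through the FastAPI backend is left out.

```python
from __future__ import annotations

import math
import re
from collections import Counter
from dataclasses import dataclass


def embed(text: str) -> Counter:
    # Hypothetical stand-in for an embedding model: term-frequency vector.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse term-frequency vectors.
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def chunk(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    # Split text into overlapping word windows (size/overlap are assumptions).
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [" ".join(words[i:i + size])
            for i in range(0, max(len(words) - overlap, 1), step)]


@dataclass
class Chunk:
    text: str
    source: str
    vector: Counter


class InMemoryStore:
    """Hypothetical stand-in for a Qdrant collection (vector + payload)."""

    def __init__(self) -> None:
        self.items: list[Chunk] = []

    def add_document(self, text: str, source: str) -> None:
        # Ingestion: chunk the document, embed each chunk, keep its source.
        for piece in chunk(text):
            self.items.append(Chunk(piece, source, embed(piece)))

    def search(self, query: str, top_k: int = 3) -> list[Chunk]:
        # Retrieval: rank every stored chunk by similarity to the query.
        q = embed(query)
        ranked = sorted(self.items, key=lambda c: cosine(q, c.vector), reverse=True)
        return ranked[:top_k]


def build_prompt(question: str, hits: list[Chunk]) -> str:
    # Augmentation: prepend retrieved context to the user's question.
    context = "\n\n".join(f"[{h.source}] {h.text}" for h in hits)
    return ("Answer the question using only the context below. "
            "If the context is insufficient, say so.\n\n"
            f"Context:\n{context}\n\nQuestion: {question}\nAnswer:")


if __name__ == "__main__":
    store = InMemoryStore()
    store.add_document("Qdrant is a vector database written in Rust.", "notes.txt")
    store.add_document("FastAPI is a Python web framework for building APIs.", "web.docx")
    hits = store.search("Which vector database is used?", top_k=1)
    print(hits[0].source)  # notes.txt
    print(build_prompt("Which vector database is used?", hits))
```

In the real pipeline the vectors would come from an embedding model and the nearest-neighbour search would run inside Qdrant, but the flow is the same: chunk, embed, store, retrieve the top-k chunks, and place them in the prompt ahead of the question. Keeping the source name with each chunk makes it easy to show where an answer came from.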